a matrix $\mathbf{A}$, each of which can be computed in $O(n)$ time (and thus $O(n^2)$ time overall). We will soon see that this sum of products corresponds to a very natural problem.

In this note we will be working with matrices and vectors. Simply put, matrices are two-dimensional arrays and vectors are one-dimensional arrays (or the "usual" notion of arrays), and we will use notation that is consistent with array notation. For this note we will assume that the numbers are small enough that all basic operations (addition, subtraction, multiplication and division) take constant time.

For the matrix multiply operation, MATLAB actually calls a BLAS library function (dgemm) to do the work. Efficient memory access patterns and multi-threading are already built into this library. The BLAS library also contains routines for matrix-vector multiplication (dgemv), again optimized for efficient memory access and multi-threaded.
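To make the operation count concrete, here is a minimal plain-Python sketch of matrix-vector multiplication as a sequence of inner products (sums of products). It assumes the matrix is stored as a list of rows; the names `inner_product` and `matvec` are hypothetical and chosen for illustration. It deliberately ignores the memory-access and multi-threading optimizations that the BLAS routines (dgemv, dgemm) provide, so it is not how MATLAB or BLAS actually compute the product.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]  # a matrix stored as a list of rows (a 2D array)


def inner_product(x: Vector, y: Vector) -> float:
    """Return x[0]*y[0] + ... + x[n-1]*y[n-1], using O(n) operations."""
    if len(x) != len(y):
        raise ValueError("vectors must have the same length")
    total = 0.0
    for xi, yi in zip(x, y):
        total += xi * yi
    return total


def matvec(A: Matrix, x: Vector) -> Vector:
    """Entry i of A x is the inner product of row i of A with x.

    For an n-by-n matrix this is n inner products of O(n) each,
    so O(n^2) operations overall.
    """
    return [inner_product(row, x) for row in A]


A = [[1.0, 2.0], [3.0, 4.0]]
x = [5.0, 6.0]
print(matvec(A, x))  # [17.0, 39.0]
```

Each output entry is independent of the others, which is one reason a library routine is free to compute them in parallel.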

If the problem seems too esoteric, just hold on to your (judgmental) horses. If you must ask, a set of numbers for which this law holds (plus some other requirements) is called a semiring.
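To illustrate why the choice of "numbers" matters so little, here is a minimal sketch of an inner product that never assumes ordinary arithmetic: the caller supplies the "addition", the "multiplication" and the additive identity. The function name `semiring_inner_product` and its parameters are hypothetical. The boolean (OR, AND) pair in the last example is a standard example of a semiring, chosen here only for illustration.

```python
import operator
from functools import reduce
from typing import Callable, Sequence, TypeVar

T = TypeVar("T")


def semiring_inner_product(
    x: Sequence[T],
    y: Sequence[T],
    add: Callable[[T, T], T],
    mul: Callable[[T, T], T],
    zero: T,
) -> T:
    """Sum of products in which "+" and "*" are supplied by the caller.

    `zero` must be the identity for `add`; it is also the result for
    empty vectors.
    """
    if len(x) != len(y):
        raise ValueError("vectors must have the same length")
    return reduce(add, (mul(a, b) for a, b in zip(x, y)), zero)


# Ordinary arithmetic on integers: 1*4 + 2*5 + 3*6 = 32
print(semiring_inner_product([1, 2, 3], [4, 5, 6], operator.add, operator.mul, 0))  # 32

# Complex numbers work too: (1j)(1j) + 2*3 = -1 + 6
print(semiring_inner_product([1j, 2], [1j, 3], operator.add, operator.mul, 0))  # (5+0j)

# Boolean semiring: "addition" is OR, "multiplication" is AND, zero is False
print(semiring_inner_product([True, False], [True, True], operator.or_, operator.and_, False))  # True
```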

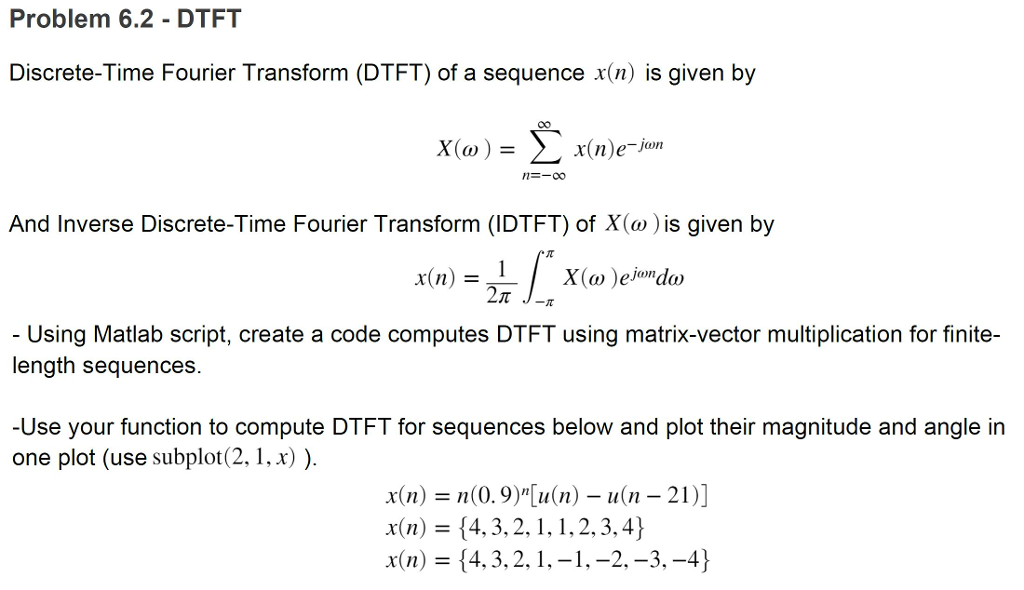

I am purposefully being vague about what exactly I mean by numbers. But for this section pretty much any reasonable set of numbers (e.g. integers, real numbers, complex numbers) works.

The video actually shows a 2D-DFT and not exactly the DFT as defined above.

Since $i$ is used liberally as an index in this note.

The Khan Academy video above calls the inner product just the dot product and uses the notation $\mathbf{x} \cdot \mathbf{y}$ instead of the inner-product notation we use in this note.

In principle, these alternatives should always yield the same results, regardless of the contents of those vectors, since they should be translated into machine code corresponding to equivalent operations. So, it seems odd to me that some kind of numerical issue arises.
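One standard source of such discrepancies is that floating-point addition is not associative: two mathematically equivalent ways of computing the same inner product can round differently if they add the products in a different order. The sketch below uses deliberately extreme, made-up values (not data from this note) to show the effect in Python; whether a particular BLAS routine reorders its summation depends on the implementation, so that part is an assumption here.

```python
import math

# Made-up vectors chosen so that rounding is visible.
x = [1e16, 1.0, -1e16]
y = [1.0, 1.0, 1.0]

products = [a * b for a, b in zip(x, y)]  # exactly [1e16, 1.0, -1e16]

# Same three terms, two summation orders.
left_to_right = (products[0] + products[1]) + products[2]  # 1e16 + 1.0 rounds back to 1e16
reordered = (products[0] + products[2]) + products[1]      # the large terms cancel first

print(left_to_right)          # 0.0
print(reordered)              # 1.0
print(math.fsum(products))    # 1.0, the correctly rounded exact sum
```

Both orders perform "the same" operations on the same numbers; only the grouping differs, and that alone changes the result.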
